Project01: iVolume — a volume-controlled RPi Radio

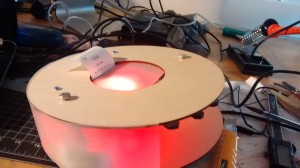

iVolume is a device that plays your favorite Pandora radio station and automatically adjusts the music volume based on how loud or quiet the surrounding environment is. As someone who loves listening to music on Pandora, I thought this would be an interesting way to use input from a microphone, and a productive way to get my hands dirty with the Raspberry Pi.

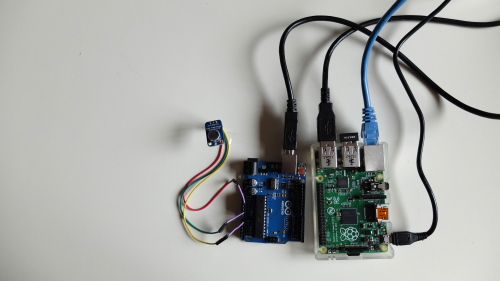

The video above illustrates the basic operation of the device. An electret microphone amplifier senses the crowd noise; an Arduino reads that signal and sends the data to the Raspberry Pi over a serial connection. On the Pi, Pianobar, an open-source, console-based client for Pandora, handles playback and comes with many features, including playing, managing, and rating stations. For this project, I used the incoming data to adjust the volume of the music. In the video, I plugged a set of speakers into the Raspberry Pi to show how the device works. However, headphones would be a better setup: with speakers, the microphone picks up the music itself, which pushes the volume up and never lets it come back down.
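To make the data flow above more concrete, here is a minimal Python sketch of what the Raspberry Pi side could look like. It is not the exact code from the project. It assumes the Arduino prints one raw 10-bit reading (0 to 1023) per line, and that Pianobar is driven through its control FIFO using the default `)` (volume up) and `(` (volume down) key bindings. The helper names (`parse_reading`, `target_step`, `volume_keys`, `send_keys`) and the ten-step volume scale are hypothetical choices for illustration.

```python
ADC_MAX = 1023  # assumption: the Arduino sends raw 10-bit analogRead() values


def parse_reading(line):
    """Turn one serial line (e.g. b'512\\r\\n') into an int, or None if malformed."""
    if isinstance(line, bytes):
        line = line.decode("ascii", errors="ignore")
    try:
        value = int(line.strip())
    except ValueError:
        return None
    # Clamp out-of-range values so later math stays within 0..ADC_MAX.
    return max(0, min(ADC_MAX, value))


def target_step(reading, steps=10):
    """Map a noise reading in 0..ADC_MAX onto a volume step in 0..steps."""
    return round(reading / ADC_MAX * steps)


def volume_keys(current_step, wanted_step):
    """Return the key presses that move the volume from one step to another.

    ')' and '(' are Pianobar's default volume up/down keys; how much each
    press changes the volume depends on the Pianobar version and config.
    """
    diff = wanted_step - current_step
    key = ")" if diff > 0 else "("
    return key * abs(diff)


def send_keys(keys, fifo_path):
    """Write key presses to Pianobar's control FIFO (hypothetical helper).

    The FIFO (commonly ~/.config/pianobar/ctl) must be created beforehand
    with mkfifo; opening it blocks until Pianobar is running and reading.
    """
    if keys:
        with open(fifo_path, "w") as fifo:
            fifo.write(keys)
```

In a real loop, the lines would come from something like pySerial's `serial.Serial(port, baudrate).readline()`, with the port name (for example `/dev/ttyACM0`) and the baud rate chosen to match the Arduino sketch. Each parsed reading would go through `target_step`, and the resulting keys would be passed to `send_keys`. Averaging several readings before changing the volume would likely make the music less jumpy.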

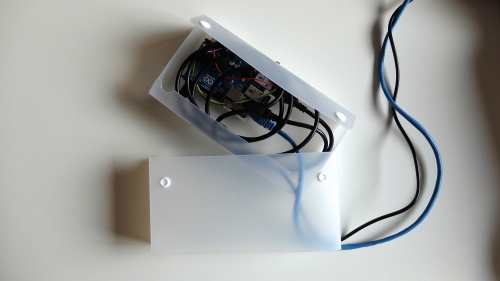

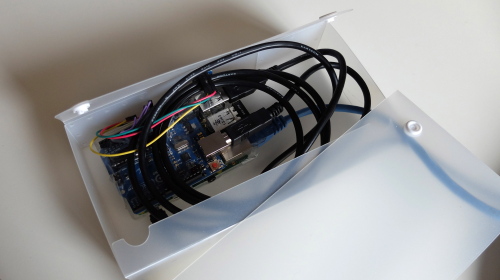

Even though there are quite a few tutorials for Pianobar + Raspberry Pi, I learned a lot working on this project. This included figuring out how to manually install libraries on the Raspberry Pi, working in the terminal, sending data from an Arduino to an RPi, and coding in Python. Looking forward, I plan to build a more durable and portable prototype, and possibly mix in other types of input to switch between stations.